Written for our “Innovations in HCI and Design” course.

Cognitive Load Theory

For form design, cognitive load theory boils down to one idea: people can hold only so much in working memory at once, so don’t overfill it. How much they can hold depends on context: is the information auditory or visual?¹ Which stage of processing is the user in? (Gwizdka 3)

Techniques for Reducing Cognitive Load

- Produce less extraneous cognitive load. Intrinsic cognitive load is inherent to the task the user is trying to accomplish. Extraneous cognitive load is extra work the user has to do because the design surrounding that task is poor, and it is the part designers can remove (Hollender et al. 1279; Feinberg & Murphy 345).

- Use multiple modalities. Mixing visual with auditory, for example, allows users to distribute the cognitive load across multiple cognitive subsystems (Oviatt 4).

- Do the work for them. Pre-filling known fields (e.g., a user’s name and address when they’re already signed in) shifts the work from the user to the computer and saves the user the effort (Gupta et al. 45; Winckler et al. 195).
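
A minimal sketch of “doing the work for them” follows. It assumes a hypothetical `Profile` record holding what the site already knows about the signed-in user, and a form modeled as a plain map from field names to values. Both shapes are illustrative and do not come from any particular framework. Known values go only into fields that are still empty, so the computer never overwrites something the user has typed.

```typescript
// Hypothetical: what the site already knows about a signed-in user,
// keyed by the same names the form uses for its fields.
type Profile = Record<string, string | undefined>;

// Hypothetical form state: field name -> current value ("" means empty).
type FormValues = Record<string, string>;

// Copy known account data into fields that are still empty.
// Anything the user has already typed is left untouched.
function prefillFromProfile(form: FormValues, profile: Profile): FormValues {
  const result: FormValues = { ...form };
  for (const field of Object.keys(profile)) {
    const known = profile[field];
    if (known !== undefined && field in result && result[field] === "") {
      result[field] = known;
    }
  }
  return result;
}

// Usage: the name is filled in from the account, the address the user
// already typed is kept, and the phone field (unknown) stays empty.
const filled = prefillFromProfile(
  { name: "", address: "12 Elm St", phone: "" },
  { name: "Ada Lovelace", address: "1 Old Rd" },
);
```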

Cognitive Load in Human-Computer Interaction

Under heavy cognitive load, users work more slowly and may make more errors (Rukzio et al. 3). Humans are goal-directed from a young age (Klossek et al.). Unless slowing users down is itself a design goal, doing so as they work toward their goals is likely to cause frustration. Reducing cognitive load should therefore lead to happier users.

Applying Cognitive Load Theory to Form Design

Cognitive load theory gives us several key takeaways:

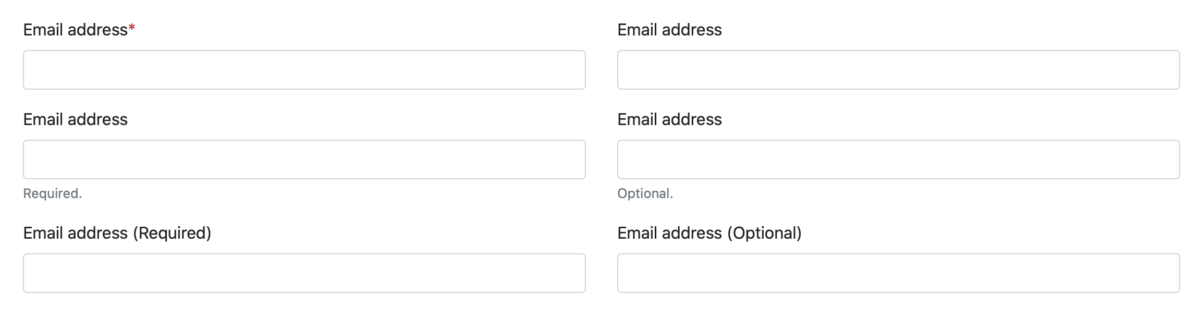

- Indicate which fields are required. Provide a clear indicator of which fields are required so your users don’t have to guess (Bargas-Avila et al., “Simple but Crucial” 5).²

- Pre-fill data when possible. Use available sources, such as an existing account or on-device sensors, to save the user the effort. However, if that data might be inaccurate, don’t guess. Leave the field blank so the user is prompted to enter the correct data (Rukzio et al. 3-4).

- Don’t interrupt the user with validation. Real-time validation is fine, as long as it doesn’t force the user to switch from ‘completion mode’ to ‘revision mode’ (Bargas-Avila et al., “Usable Error Message Presentation” 5).³
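
The “don’t guess” rule for pre-filling can be sketched as a confidence threshold. This sketch assumes that each data source reports a value together with a confidence between 0 and 1, represented by a hypothetical `Suggestion` type. Real sensors and APIs express uncertainty in their own ways. The 0.9 cut-off (`MIN_CONFIDENCE`) is an arbitrary placeholder and is not a value taken from Rukzio et al.

```typescript
// Hypothetical: a value offered by some data source (an account, a sensor)
// together with that source's confidence in it, from 0 to 1.
interface Suggestion {
  value: string;
  confidence: number;
}

// Placeholder cut-off, not taken from the literature; tune it per data source
// and per how costly a wrong guess would be for the user.
const MIN_CONFIDENCE = 0.9;

// Return the value to pre-fill, or "" to leave the field blank so the user
// enters the data themselves instead of having to spot and fix a wrong guess.
function prefillValue(suggestion: Suggestion | undefined, threshold: number = MIN_CONFIDENCE): string {
  if (suggestion === undefined || suggestion.confidence < threshold) {
    return "";
  }
  return suggestion.value;
}

// Usage
const emailGuess = prefillValue({ value: "ada@example.com", confidence: 1.0 }); // "ada@example.com"
const cityGuess = prefillValue({ value: "Springfield", confidence: 0.55 });     // "" (too uncertain)
const phoneGuess = prefillValue(undefined);                                      // "" (nothing known)
```

The function returns an empty string rather than a low-confidence value. When a field is blank, the user reads it as a field to fill in. A wrong guess, by contrast, has to be noticed before it can be corrected.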

There does not appear to have been any research into the combined effects of marking required fields and pre-filling fields. However, the first two points above can be extended as follows: a required field remains required even when it is pre-filled, and it should be marked as such.
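
A minimal sketch of the rule that a required field stays marked even when pre-filled follows. It assumes a hypothetical `Field` model in which the `required` flag is stored separately from the field’s value. The label is derived from `required` alone, so pre-filling a field cannot remove its marker. The asterisk is just the common industry convention and can be swapped for another indicator.

```typescript
// Hypothetical field model: whether a field is required is stored separately
// from its value and from whether that value was pre-filled.
interface Field {
  label: string;
  required: boolean;
  value: string;
  prefilled: boolean;
}

// The marker depends only on `required`, never on `value` or `prefilled`,
// so pre-filling a required field cannot hide its indicator.
function labelText(field: Field): string {
  return field.required ? `${field.label} *` : field.label;
}

// A required field must still be non-empty on submission, even if it was
// pre-filled and the user has since cleared it.
function isMissing(field: Field): boolean {
  return field.required && field.value.trim() === "";
}

// Usage
const emailField: Field = { label: "Email", required: true, value: "ada@example.com", prefilled: true };
const nicknameField: Field = { label: "Nickname", required: false, value: "", prefilled: false };

labelText(emailField);                   // "Email *": still marked, although pre-filled
labelText(nicknameField);                // "Nickname"
isMissing({ ...emailField, value: "" }); // true: a cleared, pre-filled required field is an error
```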

Bibliography

Baddeley, Alan D., and Graham Hitch. “Working memory.” Psychology of Learning and Motivation. Vol. 8. Academic Press, 1974. 47-89.

Bargas-Avila, Javier A., et al. “Simple but crucial user interfaces in the World Wide Web: introducing 20 guidelines for usable web form design.” User Interfaces (2010).

Bargas-Avila, Javier A., et al. “Usable error message presentation in the World Wide Web: Do not show errors right away.” Interacting with Computers 19.3 (2007): 330-341.

Budiu, Raluca. “Marking Required Fields in Forms.” Nielsen Norman Group, 16 June 2019, www.nngroup.com/articles/required-fields/.

Feinberg, Susan, and Margaret Murphy. “Applying cognitive load theory to the design of web-based instruction.” 18th Annual Conference on Computer Documentation (IPCC/SIGDOC 2000): Technology and Teamwork. Proceedings. IEEE Professional Communication Society International Professional Communication Conference. IEEE, 2000.

Gupta, Abhishek, et al. “Simplifying and improving mobile based data collection.” Proceedings of the Sixth International Conference on Information and Communications Technologies and Development: Notes-Volume 2. 2013.

Gwizdka, Jacek. “Distribution of cognitive load in web search.” Journal of the American Society for Information Science and Technology 61.11 (2010): 2167-2187.

Harper, Simon, Eleni Michailidou, and Robert Stevens. “Toward a definition of visual complexity as an implicit measure of cognitive load.” ACM Transactions on Applied Perception (TAP) 6.2 (2009): 1-18.

Hollender, Nina, et al. “Integrating cognitive load theory and concepts of human–computer interaction.” Computers in Human Behavior 26.6 (2010): 1278-1288.

Klossek, U. M. H., J. Russell, and Anthony Dickinson. “The control of instrumental action following outcome devaluation in young children aged between 1 and 4 years.” Journal of Experimental Psychology: General 137.1 (2008): 39.

Oviatt, Sharon. “Human-centered design meets cognitive load theory: designing interfaces that help people think.” Proceedings of the 14th ACM international conference on Multimedia. 2006.

Pauwels, Stefan L., et al. “Error prevention in online forms: Use color instead of asterisks to mark required-fields.” Interacting with Computers 21.4 (2009): 257-262.

Rukzio, Enrico, et al. “Visualization of uncertainty in context aware mobile applications.” Proceedings of the 8th conference on Human-computer interaction with mobile devices and services. 2006.

Stockman, Tony, and Oussama Metatla. “The influence of screen-readers on web cognition.” Proceedings of the Accessible Design in the Digital World Conference (ADDW 2008), York, UK. 2008.

Tullis, Thomas S., and Ana Pons. “Designating required vs. optional input fields.” CHI’97 Extended Abstracts on Human Factors in Computing Systems (1997): 259-260.

Winckler, Marco, et al. “An approach and tool support for assisting users to fill-in web forms with personal information.” Proceedings of the 29th ACM international conference on Design of communication. 2011.

Notes

1. The most widely cited model of working memory splits it into three subsystems: the phonological loop (sound), the episodic buffer, and the visuospatial scratchpad. All three are coordinated by a central executive (Baddeley & Hitch; Baddeley added the episodic buffer in a later revision of the model than the one cited here).

2. There is some dispute over which indicator works best. The general consensus in industry is to mark required fields with asterisks (Budiu). Studies suggest, however, that highlighting required fields with a background color performs better (Pauwels et al.), and that physically separating required fields from optional ones performs better still (Tullis & Pons). All of these sources agree that it is preferable to mark the required fields rather than the optional ones.

3. Non-interruptive real-time validation, such as adding error messages beneath invalid fields, works well for sighted users. Be aware, however, that screen readers struggle with dynamically updating pages (Stockman & Metatla). Avoid this accessibility problem by providing both real-time and on-demand validation. When the user attempts to submit the form with invalid data, present the errors in a modal fashion.
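
The combination of real-time and on-demand validation described above can be sketched as two functions sharing the same rules. The real-time check reports errors only for fields the user has already left, so typing is never interrupted. The on-submit check reports every error at once, ready to be shown together. The rule set, the `leftFields` tracking, and the message wording are all hypothetical. Presenting the summary (for instance in a modal dialog, as suggested above) is left to the page, because this DOM-free sketch covers only the logic.

```typescript
// Hypothetical rule: returns an error message, or null if the value is valid.
type Rule = (value: string) => string | null;

// Hypothetical rules for a two-field form; the patterns are deliberately simple.
const rules: Record<string, Rule> = {
  email: (v) => (/^\S+@\S+$/.test(v) ? null : "Enter an email address, like name@example.com."),
  zip: (v) => (/^\d{5}$/.test(v) ? null : "Enter a 5-digit ZIP code."),
};

// Real-time and non-interruptive: report errors only for fields the user has
// already left, so nothing appears mid-typing and focus is never moved.
// The caller would show each message inline beneath its field.
function inlineErrors(values: Record<string, string>, leftFields: string[]): Record<string, string> {
  const errors: Record<string, string> = {};
  for (const field of leftFields) {
    const rule = rules[field];
    if (rule === undefined) continue;
    const message = rule(values[field] ?? "");
    if (message !== null) errors[field] = message;
  }
  return errors;
}

// On demand, when the user tries to submit: check every field, visited or not,
// and return all errors in form order so they can be presented together.
function submitErrors(values: Record<string, string>): Array<[string, string]> {
  const errors: Array<[string, string]> = [];
  for (const field of Object.keys(rules)) {
    const rule = rules[field];
    const message = rule ? rule(values[field] ?? "") : null;
    if (message !== null) errors.push([field, message]);
  }
  return errors;
}

// Usage: the user has left the email field but not yet reached the ZIP field.
const formValues: Record<string, string> = { email: "ada@", zip: "" };
const liveErrors = inlineErrors(formValues, ["email"]); // only "email" is reported
const allErrors = submitErrors(formValues);             // "email", then "zip"
```

Both paths share one rule table, so a field can never pass inline validation and then fail at submission for a different reason.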